Compression PoC for Nokia proves 40x performance improvement

Case Study

Challenge

Nokia Airframe Group had encountered a performance bottleneck related to the software file compression of their storage application. They wanted to explore a hardware acceleration solution to compress and store 40 Gbps of raw data.

Solution

To help Nokia unburden their CPUs, Napatech designed a compression acceleration solution based on a Napatech SmartNIC, powered by FPGA.

Benefits

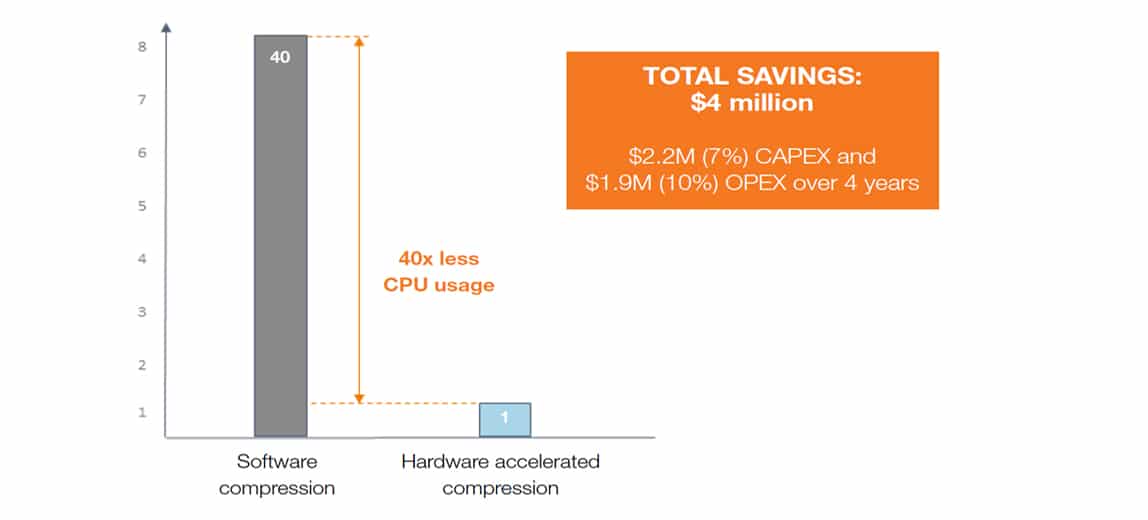

Results were outstanding: the hardware acceleration demonstrated 30x faster compression time and 40 Gbps sustained file compression for storage, using only 1 CPU core – a massive performance improvement with estimated CAPEX and OPEX savings of around $4 million in just four years’ time.

Industry pain points

While virtualization brings a series of obvious benefits, it also introduces new hurdles. The flexibility made possible by software (SW) must be weighed against the large amount of resources it consumes to deliver performance that matches hardware (HW). For SW compression, one issue is that the widely used gzip format is highly CPU-intensive. This limits throughput and reduces overall application performance.
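To make the CPU cost of software gzip concrete, the following minimal sketch times single-threaded compression with Python's standard `gzip` module and extrapolates how many cores a 40 Gbps stream would need at that rate. The payload, compression level, and helper name `gzip_throughput_gbps` are illustrative assumptions, not part of the Nokia PoC, and the numbers will vary by machine and data.

```python
import gzip
import os
import time


def gzip_throughput_gbps(payload: bytes, level: int = 6) -> float:
    """Return single-threaded gzip throughput in gigabits per second."""
    start = time.perf_counter()
    gzip.compress(payload, compresslevel=level)
    elapsed = time.perf_counter() - start
    return len(payload) * 8 / elapsed / 1e9


# Hypothetical test payload: 8 MiB built from a repeated random 4 KiB block.
block = os.urandom(4096)
payload = block * 2048

rate = gzip_throughput_gbps(payload)
print(f"software gzip on one core: {rate:.2f} Gbps")
print(f"cores needed for 40 Gbps at this rate: {40 / rate:.0f}")
```

Real workloads compress less predictably than this synthetic payload, so the measurement is only a rough indication of per-core throughput.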

Client challenge

Nokia is a global leader in creating technologies at the heart of the connected world, from enabling infrastructure for 5G and the Internet of Things, to emerging applications in virtual reality.

When Nokia encountered a performance bottleneck related to the SW file compression of their storage application, they wanted to explore a HW acceleration solution to compress and store 40 Gbps of raw data. This needed to happen on the fly, using only minimal CPU resources. Napatech was engaged to develop the Proof of Concept (PoC).

“The PoC showed excellent results and truly underpinned Napatech’s dexterity in the virtualization sphere.”

Jari Ruohonen, Sr. Product Manager, Nokia Airframe Group

Our solution

To help Nokia unburden their CPUs, Napatech designed a compression acceleration solution based on a Napatech SmartNIC, powered by FPGA. With this solution, the raw data would be sent seamlessly from the application to the HW accelerated compression engine running on the SmartNIC.

An advanced queueing system ensured that multiple compression tasks could be performed simultaneously. After compression, the file would be returned to the application with a selection of headers – gzip or similar – readable by the relevant SW. Next, the compressed file could be offloaded for storage.
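The idea of returning compressed data with a gzip header can be illustrated with a small sketch. The source does not describe how the SmartNIC frames its output, so this is an assumption: `raw_deflate` is a software stand-in for the accelerator that emits a headerless DEFLATE stream, and `wrap_as_gzip` (a hypothetical helper) adds the fixed 10-byte header and the CRC32/size trailer defined by the gzip file format (RFC 1952), so that standard tools can read the result.

```python
import gzip
import struct
import zlib


def raw_deflate(data: bytes) -> bytes:
    """Stand-in for the accelerator: produce a headerless DEFLATE stream."""
    compressor = zlib.compressobj(level=6, wbits=-15)  # negative wbits = raw DEFLATE
    return compressor.compress(data) + compressor.flush()


def wrap_as_gzip(deflated: bytes, original: bytes) -> bytes:
    """Add an RFC 1952 gzip header and trailer around a raw DEFLATE stream."""
    header = struct.pack(
        "<BBBBIBB",
        0x1F, 0x8B,  # gzip magic bytes
        8,           # compression method: DEFLATE
        0,           # flags: no optional header fields
        0,           # modification time: not set
        0,           # extra flags
        255,         # operating system: unknown
    )
    trailer = struct.pack(
        "<II",
        zlib.crc32(original) & 0xFFFFFFFF,  # CRC32 of the uncompressed data
        len(original) & 0xFFFFFFFF,         # uncompressed size modulo 2**32
    )
    return header + deflated + trailer


data = b"raw application data " * 1000
framed = wrap_as_gzip(raw_deflate(data), data)

# Any gzip-aware software can now read the result.
assert gzip.decompress(framed) == data
```

Because the header and trailer are independent of how the DEFLATE stream was produced, the same compressed payload could in principle be wrapped in a different container for other consumers.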

Benefits

The performance improvements achieved with this solution were stunning: 30 times faster compression time and 40 Gbps sustained file compression for storage using only 1 CPU core, compared with the 40 cores needed to achieve the same performance in SW only. With this solution in place, data compression could be performed quickly and reliably, losing no information even at high speeds. Over a period of four years, the CPU savings compared to SW are estimated to generate CAPEX and OPEX savings of around $4 million for a data center with approx. 10,000 servers.
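The figures above can be cross-checked with some back-of-the-envelope arithmetic. The sketch below uses only the numbers quoted in this case study; spreading the savings evenly across servers and years is a simplifying assumption, since the underlying cost model is not given.

```python
# Figures quoted in the case study.
TARGET_GBPS = 40
SW_CORES = 40
HW_CORES = 1
SAVINGS_USD = 4_000_000
SERVERS = 10_000
YEARS = 4

# Implied software throughput per core.
sw_gbps_per_core = TARGET_GBPS / SW_CORES

# Reduction in CPU cores needed for the same throughput.
core_reduction = SW_CORES / HW_CORES
cores_freed = SW_CORES - HW_CORES

# Assumption: savings are spread evenly over all servers and years.
savings_per_server_per_year = SAVINGS_USD / SERVERS / YEARS

print(f"SW throughput per core: {sw_gbps_per_core} Gbps")
print(f"core reduction: {core_reduction}x ({cores_freed} cores freed)")
print(f"savings per server per year: ${savings_per_server_per_year}")
```

Under these assumptions, software compression works out to about 1 Gbps per core, and the estimated savings amount to roughly $100 per server per year.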

Solution highlights:

• 40 Gbps sustained file compression/decompression for storage

• Only 1 CPU core used vs. 40 cores for SW only

• 40x improvement vs. SW compression

• 30x faster compression time

• High compression ratio, typically up to 3:1

• Unique de-duplication ID per file
