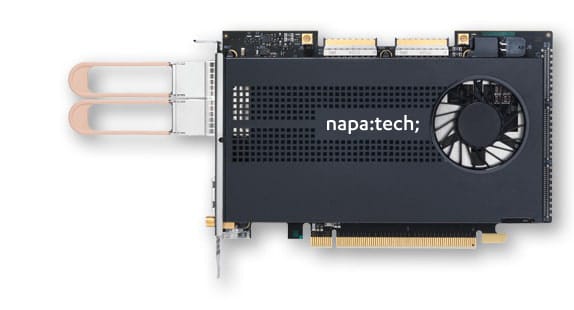

NT200A02 SmartNIC

with Link-Inline™ Software

2×100G

Data Sheet

NT200A02-SCC

NT200A02-NEBS

Cyber Security Processing Challenges

Network security architects are seeing the requirements for their solutions change quickly as network throughput explodes, while the threat landscape continues to evolve and become more complex and sophisticated. Stateless security solutions are no longer adequate to identify and block threats. Inline networking and security solutions require complete, stateful awareness of all users and applications.

To support these requirements, network infrastructure needs more intelligence and deeper inspection of traffic at increasing line rates. With this need for inline stateful flow processing, application awareness, content inspection, and security processing, the amount of compute power needed to keep up with these line rates grows exponentially.

5G Telecom User Plane Processing Challenges

As Communications Service Providers scale up the deployment of their 5G networks, it becomes critical to optimize the Return on Investment for their infrastructure. With the 5G User Plane Function (UPF) representing a significant part of the overall workload for the 5G Packet Core, it is important to leverage solutions that maximize the number of users supported per server and thereby minimize the overall cost per user. UPF applications must apply multiple actions per flow to each packet transferred from ingress to egress port. Actions include GTP encapsulation/decapsulation, MBR policing, DSCP tagging, charging info, and NAT. This places a heavy burden on the server CPU cores, so this work needs to be offloaded from the server to achieve an acceptable Return on Investment.

Stateful Flow Management and Offload

To maintain performance at high speeds and address the challenges of cyber security and telecom user plane applications, Link-Inline™ software offloads packet and flow-based processing to reconfigurable FPGA-based SmartNICs. The SmartNIC performs flow classification and identification on ingress and maintains state for each packet of a flow. For known flows, action processing is handled entirely in the SmartNIC; all other packets are forwarded to the application for additional analysis. This minimizes the load on user-space applications. Link-Inline™ software additionally provides the ability to dynamically identify and direct data flows to specific CPU cores based on the type of traffic being analyzed. Link-Inline™ software is tightly coupled to x86 cores for optimized flow processing in demanding applications. Per-flow match/action processing in hardware gives control back to the user: it frees compute capacity for the application by reducing the amount of data that must be processed, because flows or protocols that no longer need monitoring can be blocked or forwarded in hardware.
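The split between known and unknown flows described above can be illustrated with a small software model. This is a minimal sketch, not Napatech's implementation or API: the names `FlowTable`, `FlowKey`, `FlowEntry`, and `Action` are hypothetical, and a Python dictionary stands in for the flow table that the SmartNIC keeps in hardware.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, NamedTuple, Optional


class Action(Enum):
    DROP = "drop"          # block the flow on the card
    FORWARD = "forward"    # fast forward (inline / hairpin)
    TO_HOST = "to_host"    # deliver the packet to the application


class FlowKey(NamedTuple):
    """Hypothetical 5-tuple flow key."""
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: int


@dataclass
class FlowEntry:
    action: Action
    packets: int = 0
    bytes: int = 0


class FlowTable:
    """Software stand-in for the flow table held on the SmartNIC."""

    def __init__(self) -> None:
        self._flows: Dict[FlowKey, FlowEntry] = {}

    def learn(self, key: FlowKey, action: Action) -> None:
        # The application decides what to do with a flow and programs it.
        self._flows[key] = FlowEntry(action)

    def process(self, key: FlowKey, length: int) -> Action:
        entry = self._flows.get(key)
        if entry is None:
            # Unknown flow: hand the packet to the application for analysis.
            return Action.TO_HOST
        # Known flow: update counters and apply the action without the host.
        entry.packets += 1
        entry.bytes += length
        return entry.action

    def terminate(self, key: FlowKey) -> Optional[FlowEntry]:
        # Remove the flow and return its counters as a flow record.
        return self._flows.pop(key, None)
```

In this model, the first packet of a flow returns `Action.TO_HOST`; once the application calls `learn`, later packets of the same flow are handled by the table alone, and `terminate` returns the accumulated counters.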

Napatech’s Link-Inline™ software accelerates standard Linux applications and provides open APIs for development and integration of inline network applications. The solution significantly reduces host CPU utilization and solution latency by offloading complex flow classification and packet processing to the SmartNIC.

Stateful flow management

- Up to 140 million flows

- Learning rate: > 1.5 million flows/sec

- Flow match/actions:

  - Drop

  - Fast forward (inline / hairpin)

  - RSS (CPU load distribution)

  - GTP encapsulation/decapsulation (1k L2, L3 or L4 setups)

  - Tunnel ID configured per flow

  - MBR (Maximum Bit Rate) policing

  - DSCP (Differentiated Services Code Point) tagging

  - NAT (Network Address Translation)

  - Flow mirroring

- Flow termination: TCP protocol, timeout, application-requested

- Flow records: Rx packet/byte counters and TCP flags, delivered to application at flow termination

- Configurable flow definitions based on 2-, 3-, 4-, 5- or 6-tuple
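MBR policing, listed among the flow actions above, is commonly modelled as a token bucket. The sketch below shows that general technique only; the data sheet does not state which algorithm the SmartNIC uses, and the class name `TokenBucketPolicer` and its parameters are hypothetical.

```python
class TokenBucketPolicer:
    """Single-rate token bucket: tokens are bytes, refilled at rate_bps / 8."""

    def __init__(self, rate_bps: float, burst_bytes: float, start_time: float = 0.0) -> None:
        self.rate_bps = rate_bps          # maximum bit rate for the flow
        self.burst_bytes = burst_bytes    # bucket depth (largest allowed burst)
        self.tokens = burst_bytes         # start with a full bucket
        self.last_time = start_time       # time of the previous packet, in seconds

    def allow(self, now: float, packet_bytes: int) -> bool:
        """Return True if the packet conforms to the rate, False if it exceeds it."""
        elapsed = max(0.0, now - self.last_time)
        self.last_time = now
        # Refill by the bytes earned since the last packet, capped at the depth.
        self.tokens = min(self.burst_bytes, self.tokens + elapsed * self.rate_bps / 8.0)
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False
```

A policer created with `TokenBucketPolicer(rate_bps=8000, burst_bytes=1500)` accepts one 1500-byte packet immediately, rejects a second one sent at the same instant, and accepts 1000 more bytes after one second has passed.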

Pre-filtering

- Configurable 2-, 3-, 4-, 5- or 6-tuple, enabling up to 36,000 IPv4 or up to 8,000 IPv6 addresses

- 864 (32-bit) wildcard entries

- General purpose filters: Pattern match, network port, protocol
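A 32-bit wildcard entry can be thought of as a value/mask pair compared against a 32-bit field such as an IPv4 address. The sketch below assumes that interpretation, which the data sheet does not spell out; `WildcardEntry`, `entry_from_cidr`, and `matches` are hypothetical names.

```python
import ipaddress
from typing import NamedTuple


class WildcardEntry(NamedTuple):
    value: int  # bits that must match
    mask: int   # 1 = compare this bit, 0 = wildcard


def entry_from_cidr(cidr: str) -> WildcardEntry:
    """Build a value/mask entry from an IPv4 prefix such as '10.1.0.0/16'."""
    net = ipaddress.IPv4Network(cidr)
    return WildcardEntry(int(net.network_address), int(net.netmask))


def matches(entry: WildcardEntry, addr: str) -> bool:
    """True if the address equals the entry value on every masked bit."""
    return (int(ipaddress.IPv4Address(addr)) & entry.mask) == entry.value
```

Unlike a plain prefix, a mask does not have to be contiguous: `WildcardEntry(0x0A000001, 0xFF0000FF)` matches any address of the form `10.x.y.1`.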

CPU load distribution

- Load distribution based on hash key, filter or per flow

- Hash keys, calculated on 5-tuple from inner or outer headers
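Hash-based load distribution can be sketched as follows: a hash over the 5-tuple selects one of the Rx queues, so every packet of a flow reaches the same CPU core. The hash function the SmartNIC uses is not given in this data sheet; CRC32 is used here purely for illustration. Sorting the two endpoints so that both directions of a flow map to the same queue is also an assumption of this sketch, and `queue_for_flow` is a hypothetical name.

```python
import zlib


def queue_for_flow(src_ip: str, src_port: int, dst_ip: str, dst_port: int,
                   protocol: int, num_queues: int) -> int:
    """Map a 5-tuple to a queue index in the range [0, num_queues)."""
    a = f"{src_ip}:{src_port}"
    b = f"{dst_ip}:{dst_port}"
    # Order the endpoints so A->B and B->A produce the same key.
    low, high = sorted((a, b))
    key = f"{low}|{high}|{protocol}".encode()
    return zlib.crc32(key) % num_queues
```

With this ordering step, a request and its reply are steered to the same queue, which keeps per-flow state local to one core.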

Rx Packet Processing

- 128 Rx queues

- Multi-port packet merge, sequenced in time stamp order

- L2, L3 and L4 protocol classification

  - L2: Ether II, IEEE 802.3 LLC, IEEE 802.3/802.2 SNAP

  - L2: PPPoE Discovery, PPPoE Session, Raw Novell

  - L2: ISL, 3x VLAN, 7x MPLS

  - L3: IPv4, IPv6

  - L4: TCP, UDP, ICMP, SCTP

- Tunneling support

  - GTP, IP-in-IP, GRE, NVGRE, VxLAN, Pseudowire, Fabric Path
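The multi-port packet merge listed above combines the streams received on several ports into one stream ordered by time stamp. A minimal software sketch of that idea, assuming each port already delivers its packets in time stamp order, is shown below; the `Packet` type and `merge_ports` function are hypothetical and only model the behavior.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Packet(NamedTuple):
    timestamp_ns: int  # receive time stamp
    port: int          # network port the packet arrived on
    payload: bytes


def merge_ports(*streams: Iterable[Packet]) -> Iterator[Packet]:
    """Merge per-port streams (each sorted by time stamp) into one sorted stream."""
    return heapq.merge(*streams, key=lambda p: p.timestamp_ns)
```

Because `heapq.merge` is lazy, the merged stream can be consumed packet by packet without buffering the complete input from either port.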

Tx Packet Processing

- 128 Tx queues

Network Standards

- IEEE 802.3 100G Ethernet

Supported pluggable modules

- 100GBASE-SR4, SR-BiDi, LR4

Supported OS and Orchestration

- Linux kernel 5.17 (64-bit)

- Kubernetes

Supported APIs

- DPDK v. 21.11.1, RTE_FLOW, RTE_METER

Hardware

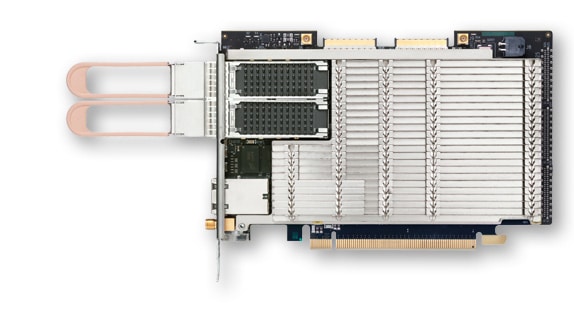

- Xilinx XCVU5P FPGA

- 12 GB DDR4 SDRAM

- PCIe Gen3 16 lanes @ 8 GT/s

- 2 × QSFP28 network ports

- RJ45-F 1000BASE-T IEEE1588 PTP

- SMA-F PPS input/output

- 2 × internal MCX-F PPS and NT-TS time sync

- Stratum-3 [1] compliant TCXO

- Flash memory with support for two boot images

- Physical dimensions: ½-length and full-height PCIe

- Weight excluding pluggable modules:

  - NT200A02-SCC: 355 g

  - NT200A02-NEBS: 350 g

- MTBF according to UTE C 80-810:

  - NT200A02-SCC: 317,821 hours

  - NT200A02-NEBS: 398,565 hours

- Power consumption including 100GBASE-SR4 modules and typical traffic load:

  - NT200A02-SCC: 75 Watts

  - NT200A02-NEBS: 75 Watts

Board Management

- MCTP over SMBus

- PLDM for Monitor and Control

- Built-in thermal protection

Monitoring Sensors

- PCB temperature level with alarm

- FPGA temperature level with alarm and automatic shutdown

- Temperature of critical components

- Individual optical port temperature and light level with alarm

- Voltage or current overrange with alarm

- Cooling fan speed with alarm

Environment for NT200A02-SCC (active cooling)

- Operating temperature: 0 °C to 45 °C (32 °F to 113 °F)

- Operating humidity: 20% to 80%

Environment for NT200A02-NEBS (passive cooling)

- Operating temperature: –5 °C to 55 °C (23 °F to 131 °F) measured around the SmartNIC

- Operating humidity: 5% to 85%

- Altitude: < 1,800 m

- Airflow: ≥ 2.5 m/s

Regulatory Approvals and Compliances

- PCI-SIG®, NEBS level 3, CE, CB, RoHS, REACH, cURus (UL), FCC, ICES, VCCI, RCM

Orderable port speed configurations

| Product | Data Rates included |
|---|---|
| NT200A02-2×100 | 2 × 100 Gbps |

[1] Stratum 3E compliant TCXO option supported by HW
