As Communications Service Providers (CSPs) worldwide scale up their 5G deployments, they face strong pressure to optimize their Return on Investment (ROI), given the massive sums they have already spent acquiring spectrum and the ongoing costs of infrastructure rollouts.

What will you do with all the money you save in your edge data center?

Driven by latency-sensitive use cases such as virtual RAN, IoT, Secure Access Service Edge (SASE) and Multi-access Edge Computing (MEC), service providers and data center operators are increasingly deploying compute resources at the edge of the network. Whether these resources are located in traditional telco Points of Presence (PoPs), Converged Cable Access Platforms (CCAPs) or purpose-built micro data centers, they face common constraints in terms of hardware footprint and power consumption. At the same time, with thousands of edge data centers being deployed, operators are under pressure to ensure their data centers are as cost-effective as possible.

In this blog, we’ll review one emerging approach to these footprint, power and cost challenges in edge data centers: offloading virtual switching from server CPUs onto programmable Smart Network Interface Cards (SmartNICs). Then, going beyond the purely technical merits of this strategy, we’ll discuss how to quantify the financial benefits of vSwitch offload and introduce an online calculator that estimates how much money you could save based on the specifics of your data center use case.

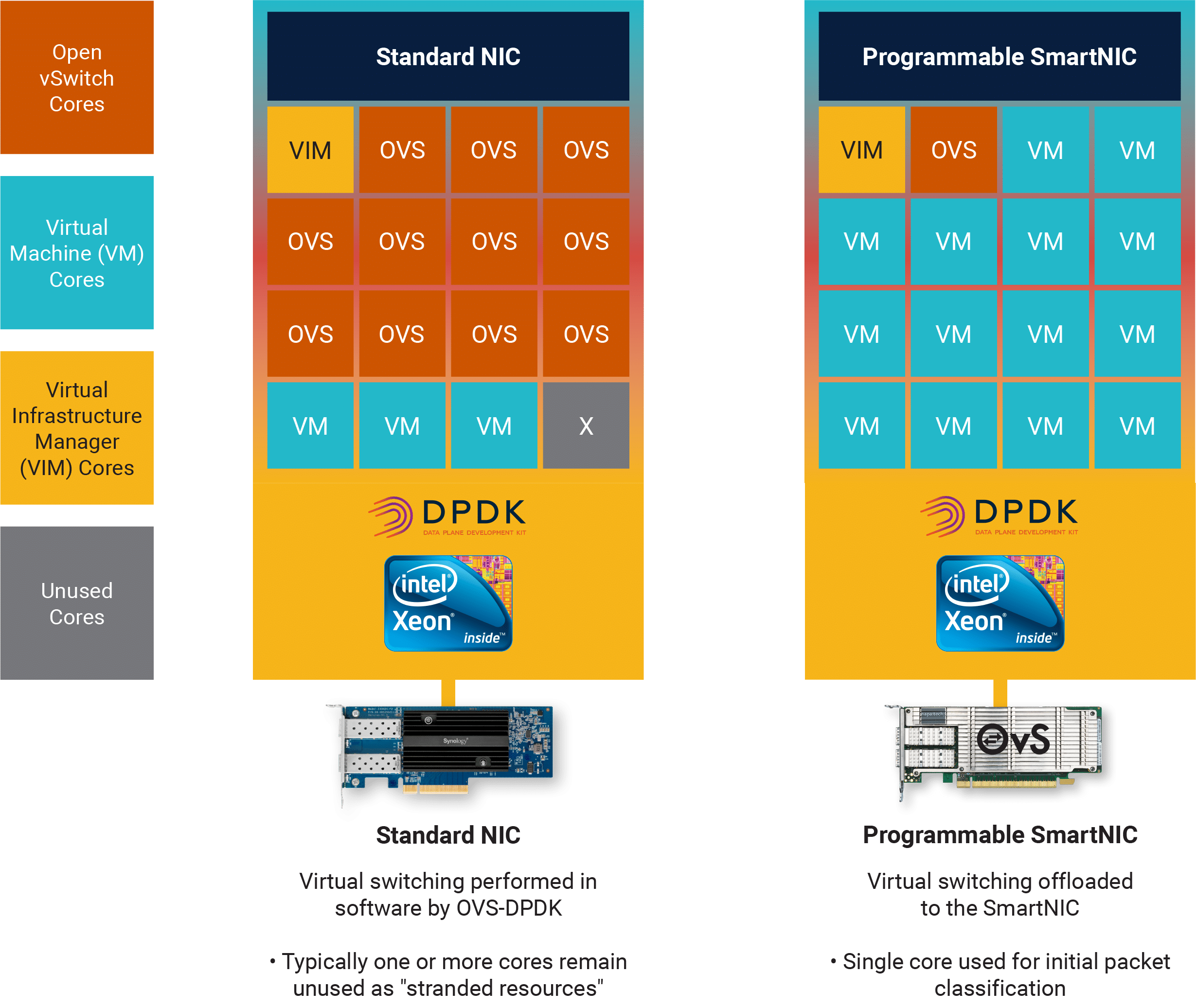

Within a typical edge data center, virtual switching connects Virtual Machines (VMs) to both virtual and physical networks. In many use cases, a vSwitch is also needed to switch “East-West” traffic between the VMs themselves, supporting applications such as advanced security, video processing or CDN. Various vSwitch implementations are available, with OVS-DPDK (Open vSwitch accelerated by the Data Plane Development Kit) probably the most widely used, thanks to the performance it gains from the DPDK software libraries and its status as a standard open-source project.

The challenge for solution architects is that, as a software-based function, the vSwitch has to run on the same server CPUs as the VMs. But only the VMs running applications and services ultimately generate revenue for the operator: no one gets paid just for switching network traffic. So there’s a strong business incentive to minimize the number of cores consumed by the vSwitch in order to maximize the number available for VMs.
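In OVS-DPDK, the cores handed to the vSwitch are typically chosen with the `other_config:pmd-cpu-mask` setting (applied via `ovs-vsctl set Open_vSwitch . other_config:pmd-cpu-mask=...`), a hex bitmask in which bit N pins a busy-polling poll-mode-driver (PMD) thread to logical core N. Every core in that mask is unavailable to VMs. The sketch below builds the mask from a list of cores; the layout it uses (core 0 for management, cores 1 and 2 for the vSwitch) is a hypothetical example, not a recommendation.

```python
def pmd_cpu_mask(cores):
    """Return the hex CPU mask selecting the given logical cores.

    Bit N is set when core N should run an OVS-DPDK PMD thread.
    """
    mask = 0
    for core in cores:
        if core < 0:
            raise ValueError(f"invalid core id: {core}")
        mask |= 1 << core
    return hex(mask)


# Hypothetical layout: core 0 for management, cores 1-2 dedicated to the vSwitch.
vswitch_cores = [1, 2]
print(f"ovs-vsctl set Open_vSwitch . other_config:pmd-cpu-mask={pmd_cpu_mask(vswitch_cores)}")
# prints: ovs-vsctl set Open_vSwitch . other_config:pmd-cpu-mask=0x6
```

Each additional vSwitch core sets one more bit and removes one more core from the pool that can host revenue-generating VMs.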

For low-bandwidth use cases, this isn’t a problem: the latest versions of OVS-DPDK can switch approximately 8 million packets per second (Mpps) of bidirectional network traffic per core, assuming 64-byte packets. So if each VM core requires only 1 Mpps, a single vSwitch core can switch enough traffic to support eight VM cores, and the switching overhead is modest. On a 16-core CPU with one core dedicated to management functions, two vSwitch cores can therefore support the remaining 13 VM cores.

But if each VM core requires 10 Mpps, more than one vSwitch core is needed for every VM core, and more than half of the cores available for workloads end up doing nothing but switching. In the 16-core scenario, eight cores must run the vSwitch while only six (consuming 60 Mpps) run VMs, and one core is left unused. The problem only gets worse as the bandwidth requirement of each VM increases.
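The arithmetic in the two scenarios above can be reproduced with a small search. This is a minimal sketch that assumes vSwitch throughput scales linearly at a fixed rate per core (about 8 Mpps, as quoted above) and that each VM core needs a fixed packet rate; real OVS-DPDK scaling depends on traffic mix, flow count and NUMA placement. The function name `split_cores` is hypothetical.

```python
import math


def split_cores(total_cores, reserved_cores, vswitch_mpps_per_core, vm_mpps_per_core):
    """Return (vswitch_cores, vm_cores, stranded_cores) for a standard NIC.

    Finds the largest number of VM cores whose traffic the remaining cores
    can switch, using the fewest vSwitch cores that cover that traffic.
    """
    available = total_cores - reserved_cores
    for vm_cores in range(available, 0, -1):
        # vSwitch cores needed to carry the aggregate VM traffic.
        vswitch_cores = math.ceil(vm_cores * vm_mpps_per_core / vswitch_mpps_per_core)
        if vm_cores + vswitch_cores <= available:
            return vswitch_cores, vm_cores, available - vm_cores - vswitch_cores
    return 0, 0, available


# 16-core CPU, one management core, ~8 Mpps per vSwitch core (64-byte packets).
for vm_mpps in (1, 10):
    vs, vm, stranded = split_cores(16, 1, 8, vm_mpps)
    print(f"{vm_mpps} Mpps per VM core: {vs} vSwitch, {vm} VM, {stranded} stranded")
# 1 Mpps per VM core: 2 vSwitch, 13 VM, 0 stranded
# 10 Mpps per VM core: 8 vSwitch, 6 VM, 1 stranded
```

Searching downward from the largest possible VM count makes the stranded core in the 10 Mpps case fall out naturally: a seventh VM core would need nine vSwitch cores, which no longer fit.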

Dedicating a significant number of CPU cores to virtual switching means that more servers are required to support a given number of subscribers or, conversely, that the number of subscribers a given data center footprint can support is unnecessarily constrained. Power is also consumed running functions that generate no revenue. Minimizing the cores spent on virtual switching therefore matters most in high-throughput use cases.

Programmable SmartNICs address this problem by offloading the OVS-DPDK fast path to the NIC. Only a single CPU core is consumed to support this offload: it performs the “slow path” classification of the first packet in each flow, after which the remaining packets in that flow are processed entirely in the SmartNIC. This frees up the remaining CPU cores to run VMs, significantly improving VM density (the number of VM cores per CPU).
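The slow-path/fast-path split can be illustrated with a toy model. This is a conceptual sketch, not OVS code and not any SmartNIC vendor’s API: the class and method names are hypothetical, and the exact-match 5-tuple table is a simplification (OVS itself uses wildcarded megaflows, and OVS-DPDK programs NIC flow rules through DPDK’s `rte_flow` API). It shows why host CPU work scales with the number of new flows rather than the number of packets.

```python
from dataclasses import dataclass, field

# 5-tuple flow key: (src_ip, dst_ip, ip_proto, src_port, dst_port)
FlowKey = tuple[str, str, int, int, int]


@dataclass
class OffloadedVSwitch:
    """Toy model: slow path on the host CPU, fast path in a SmartNIC flow table."""

    policy: dict[str, str]  # slow-path rules: dst_ip -> output port
    nic_flow_table: dict[FlowKey, str] = field(default_factory=dict)
    slow_path_packets: int = 0
    fast_path_packets: int = 0

    def _slow_path(self, key: FlowKey) -> str:
        # Host CPU: full policy lookup, done only for the first packet of a flow.
        self.slow_path_packets += 1
        action = self.policy.get(key[1], "drop")
        # Install an exact-match entry so later packets stay in the NIC.
        self.nic_flow_table[key] = action
        return action

    def forward(self, key: FlowKey) -> str:
        action = self.nic_flow_table.get(key)
        if action is None:
            return self._slow_path(key)
        # SmartNIC: table hit, no host CPU cycles spent.
        self.fast_path_packets += 1
        return action


sw = OffloadedVSwitch(policy={"10.0.0.2": "vm1", "10.0.0.3": "vm2"})
flow = ("10.0.0.1", "10.0.0.2", 6, 40000, 80)
for _ in range(1000):
    sw.forward(flow)
print(sw.slow_path_packets, sw.fast_path_packets)  # 1 999
```

For long-lived, high-rate flows, the single slow-path core handles a tiny fraction of the packets, which is why one core can suffice.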

The diagram illustrates a use case, based around a 16-core CPU connected to a standard NIC, where eleven vSwitch cores are required in order to switch the traffic consumed by three VM cores.

Because there are not enough free cores to add a fourth VM core together with the extra vSwitch cores its traffic would require, one CPU core is left unused as a stranded resource. This kind of waste is typical of use cases where each VM needs high bandwidth.
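The per-VM traffic behind the diagram is not stated, but if we assume the roughly 8 Mpps per vSwitch core quoted earlier also applies here, the 11/3 split pins it down. With $r$ the traffic per VM core in Mpps and 15 usable cores (16 minus one management core), a worked check looks like this:

```latex
\begin{aligned}
\left\lceil \tfrac{3r}{8} \right\rceil = 11
  &\iff 10 < \tfrac{3r}{8} \le 11
  \iff 26.7 < r \le 29.3 \\
\text{fourth VM core infeasible:}\quad
4 + \left\lceil \tfrac{4r}{8} \right\rceil > 15
  &\iff \left\lceil \tfrac{r}{2} \right\rceil > 11
  \iff r > 22 \\
\text{stranded cores} &= 15 - 11 - 3 = 1
\end{aligned}
```

Under that assumption, the diagram corresponds to roughly 27 to 29 Mpps per VM core, and the single stranded core follows directly.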

Offloading the vSwitch function to a programmable SmartNIC frees up ten additional cores that are now available to run VMs, so the VM density in this case increases from three to thirteen, an improvement of about 4.3x.

In both the standard NIC and SmartNIC examples, a single CPU core is reserved to run the Virtual Infrastructure Manager, which represents a typical configuration for virtualized use cases.

Once you have used this approach to calculate the VM density improvement achievable by offloading the vSwitch to a programmable SmartNIC, it’s straightforward to estimate the resulting cost savings over whatever timeframe interests your CFO. Start with the total number of VMs that need to be hosted in the data center, then factor in some basic assumptions about server and NIC cost and power, OPEX-vs.-CAPEX metrics, data center Power Usage Effectiveness (PUE) and server refresh cycles.
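The savings estimate described above can be sketched in a few lines. This is not the online calculator’s actual model: every cost and power figure is a placeholder, the reading of “OPEX-vs.-CAPEX metrics” as a yearly non-power OPEX share of CAPEX is an assumption, and the densities (5 and 12 VM cores per server) are hypothetical stand-ins for the output of the density step.

```python
import math
from dataclasses import dataclass


@dataclass
class Assumptions:
    # Placeholder values for illustration only; replace with your own.
    server_cost: float = 10_000.0   # USD per server, excluding the NIC
    nic_cost: float = 500.0         # USD per standard NIC
    smartnic_cost: float = 2_000.0  # USD per SmartNIC
    server_power_w: float = 400.0   # average server draw, watts
    smartnic_extra_w: float = 25.0  # extra draw of a SmartNIC vs. a NIC, watts
    pue: float = 1.5                # data center Power Usage Effectiveness
    usd_per_kwh: float = 0.12
    other_opex_share: float = 0.10  # assumed yearly non-power OPEX as a share of CAPEX
    refresh_years: int = 5          # server refresh cycle
    period_years: int = 5           # evaluation window


def total_cost(vm_cores_needed, vm_cores_per_server, nic_cost, nic_extra_w, a):
    """Return (servers, capex, opex) in USD over the evaluation window."""
    servers = math.ceil(vm_cores_needed / vm_cores_per_server)
    capex_per_cycle = servers * (a.server_cost + nic_cost)
    purchases = math.ceil(a.period_years / a.refresh_years)
    capex = capex_per_cycle * purchases
    hours = 24 * 365 * a.period_years
    # Facility energy = IT energy scaled by PUE.
    kwh = servers * (a.server_power_w + nic_extra_w) * a.pue * hours / 1000
    opex = kwh * a.usd_per_kwh + capex_per_cycle * a.other_opex_share * a.period_years
    return servers, capex, opex


a = Assumptions()
need = 1_200  # hypothetical total VM cores to host
std = total_cost(need, 5, a.nic_cost, 0.0, a)                         # standard NIC
smart = total_cost(need, 12, a.smartnic_cost, a.smartnic_extra_w, a)  # SmartNIC offload
print(f"servers: {std[0]} -> {smart[0]}")
print(f"CAPEX saving: ${std[1] - smart[1]:,.0f}")
print(f"OPEX saving:  ${std[2] - smart[2]:,.0f}")
```

Keeping server count as the pivot makes the structure of the savings clear: a higher VM density shrinks the fleet, and both CAPEX and power-driven OPEX scale with the fleet, partly offset by the higher SmartNIC price and power draw.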

To save time, there’s a new online calculator that walks you through the whole process. The first section uses your inputs on the server configuration and workload to calculate the VM density improvement that you can expect from offloading the vSwitch to a programmable SmartNIC. The second section produces estimated CAPEX and OPEX savings based on your data center-related assumptions. There’s even a link to reach experts who can help you with any questions about the tool.

Server CPUs are among the most expensive individual components in an edge data center. As these data centers proliferate in support of innovative new applications and services, it’s important to use those CPUs as cost-effectively as possible in order to maximize your overall return on investment. Offloading the vSwitch to a programmable SmartNIC can yield significant cost savings, so we encourage you to plug your assumptions into this model and see what the benefits could be in your specific use case.