As Communications Service Providers (CSPs) worldwide scale up the deployments of their 5G networks, they face strong pressure to optimize their Return on Investment (ROI), given the massive expenses they have already incurred to acquire spectrum as well as the ongoing costs of infrastructure rollouts.

Solving the NFV Challenge: The Need for Virtualized Acceleration and Offloads

In my previous blog article, I covered the history of NFV, the vision behind the concept, and the challenges in realizing that vision, particularly in the 5G network era. In this installment, I present the solutions required to overcome those challenges.

The Solution: OVS Offload

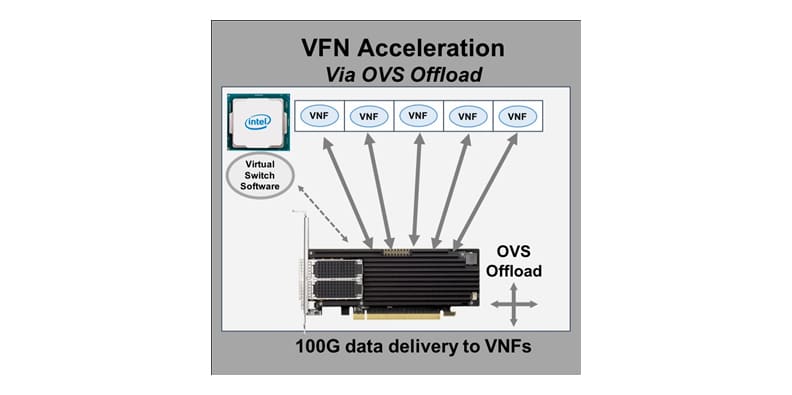

To meet these stringent requirements, operators have realized that scaling virtualized network functions (VNFs) to meet performance goals requires data plane acceleration based on FPGA-based SmartNICs. This technique offloads work from the x86 processors that host the varied VNFs needed to support the breadth of services promised.

Certain tasks are well suited to running in software on CPUs, whereas others are poor candidates for general-purpose processors because the instruction set is not optimized for them. By embracing a workload-specific processing architecture that places each workload in the right place in the system with the right technology, operators can dramatically decrease their total cost of ownership for an NFV solution by reducing the data center footprint required for a given number of users. Obvious workloads that benefit from acceleration and offload are networking and security tasks such as switching, routing, action handling, flow management, load balancing, cryptography (SSL, IPsec, ZUC, etc.), compression and deduplication.

SmartNIC acceleration of virtual switching proves to be the highest-performing and most secure method of deploying VNFs. Virtual machines (VMs) can use accelerated packet I/O and guaranteed traffic isolation via hardware while maintaining vSwitch functionality. FPGA-based SmartNICs specialize in the match/action processing required for vSwitches and can offload critical security processing, freeing up CPU resources for VNF applications. Functions like virtual switching, flow classification, filtering, intelligent load balancing and encryption/decryption can all be performed in the SmartNIC, offloaded from the x86 processor housing the VNFs. Through technologies like VirtIO, this offload remains transparent to the VNF, providing a common management and orchestration layer to the network fabric.

SmartNICs can transparently offload virtual switching data path processing for networking functions such as network overlays (tunnels), security, load balancing and telemetry, enabling COTS servers used for NFV workloads to perform at their full potential. Additionally, FPGA-based SmartNICs are fully programmable, so new features can be rolled out quickly as requirements evolve without compromising hardware-based performance and efficiency.

NFV Offload Architectures

Numerous offload designs are possible in this type of workload-specific processing architecture, and the choice depends on the VNF application in question. The first and most fundamental design decision is whether the offload and acceleration are deployed via an “inline” or “look-aside” model. When deployed inline, virtual switching and potentially other functions are tightly coupled with the network I/O. Data arrives at the SmartNIC, traverses the OVS data plane, and is demultiplexed via a flow-based match and action handling process.

Flow definitions and the actions that can be applied to a flow are numerous and include, but are not limited to, forwarding to physical or virtual ports, packet manipulation, metering, QoS, load balancing, drop, redirect, mirror, or forward to an additional processing block of logic. With software-programmable FPGAs, the virtual switch can forward flows to a subsequent processing stage, defined by the user, that applies additional processing to the data stream inline. Packets can be encrypted/decrypted, compressed/decompressed or deduplicated, or any custom workload can be applied to increase application performance and decrease latency. These actions are not part of the standardized vSwitch capabilities.
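The match/action handling described above can be illustrated with a small software model. This is only a sketch, not OVS or any SmartNIC vendor's API: the `FlowTable`, `Packet`, `forward`, `drop` and `custom_stage` names are hypothetical, the flow key is assumed to be a simple 5-tuple, and `zlib` compression stands in for a user-defined inline processing stage.

```python
import zlib
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

# Assumption: a flow is identified by a 5-tuple
# (src_ip, dst_ip, protocol, src_port, dst_port).
FlowKey = Tuple[str, str, str, int, int]


@dataclass
class Packet:
    key: FlowKey
    payload: bytes
    out_port: Optional[str] = None
    dropped: bool = False


Action = Callable[[Packet], Packet]


def forward(port: str) -> Action:
    """Action: send the packet to a physical or virtual port."""
    def act(pkt: Packet) -> Packet:
        pkt.out_port = port
        return pkt
    return act


def drop() -> Action:
    """Action: discard the packet."""
    def act(pkt: Packet) -> Packet:
        pkt.dropped = True
        return pkt
    return act


def custom_stage(fn: Callable[[bytes], bytes]) -> Action:
    """Action: hand the payload to an additional, user-defined stage."""
    def act(pkt: Packet) -> Packet:
        pkt.payload = fn(pkt.payload)
        return pkt
    return act


class FlowTable:
    """Hypothetical match/action table keyed on an exact flow match."""

    def __init__(self, default_actions: List[Action]):
        self._flows: Dict[FlowKey, List[Action]] = {}
        self._default = list(default_actions)

    def add_flow(self, key: FlowKey, actions: List[Action]) -> None:
        self._flows[key] = list(actions)

    def process(self, pkt: Packet) -> Packet:
        # Match on the flow key, then apply that flow's actions in order.
        for action in self._flows.get(pkt.key, self._default):
            pkt = action(pkt)
            if pkt.dropped:
                break
        return pkt


# Usage: compress one flow inline, then forward it; drop everything else.
table = FlowTable(default_actions=[drop()])
web_flow = ("10.0.0.1", "10.0.0.2", "tcp", 40000, 443)
table.add_flow(web_flow, [custom_stage(zlib.compress), forward("vf0")])
result = table.process(Packet(web_flow, b"hello" * 100))
```

A real vSwitch data path would also support wildcard matches, priorities and counters. The sketch keeps only the exact-match lookup and the ordered action list to show how a custom stage slots in alongside standard actions.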

Alternatively, when deployed in a look-aside model, after vSwitch processing, traffic is sent to the host processor where the VNF(s) are housed. The VNF application can then determine how to process the traffic. If additional offloads are required, traffic can be passed back to the FPGA-based SmartNIC or SmartCard where custom processing can occur on the traffic to offload the host processor.
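The difference between the two models comes down to the order in which a packet visits the SmartNIC and the host. The following sketch traces that order under simplifying assumptions: the hop labels and the `packet_path` helper are illustrative only, and what happens to traffic after look-aside processing is left out because it depends on the application.

```python
from enum import Enum
from typing import List


class OffloadModel(Enum):
    INLINE = "inline"
    LOOK_ASIDE = "look-aside"


def packet_path(model: OffloadModel, needs_extra_offload: bool) -> List[str]:
    """Return the ordered list of hops a packet takes (hypothetical labels)."""
    # In both models the packet first traverses the vSwitch on the SmartNIC.
    path = ["smartnic:vswitch"]
    if model is OffloadModel.INLINE:
        # Inline: extra processing is applied on the NIC before the host
        # ever sees the packet.
        if needs_extra_offload:
            path.append("smartnic:custom-stage")
        path.append("host:vnf")
    else:
        # Look-aside: the VNF receives the packet first and decides whether
        # to pass it back to the SmartNIC for custom processing.
        path.append("host:vnf")
        if needs_extra_offload:
            path.append("smartnic:custom-stage")
    return path


inline_hops = packet_path(OffloadModel.INLINE, needs_extra_offload=True)
look_aside_hops = packet_path(OffloadModel.LOOK_ASIDE, needs_extra_offload=True)
```

In the inline trace the host appears once, at the end. In the look-aside trace the VNF sits in the middle of the path and the packet crosses between host and SmartNIC an extra time, which is the trade-off for letting the application decide what to offload.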

NFV acceleration can be done in a transparent mode, where the VNF is completely unaware that a SmartNIC is accelerating data delivery to the application via VirtIO. Alternatively, in VNF-aware mode, the application is made aware of the programmable acceleration technology and, via APIs, can influence the data plane processing of its traffic to obtain additional offloads and accelerations.
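To make the two modes concrete, here is a toy model of the contrast. It is a sketch under stated assumptions, not a real driver or vendor interface: `SmartNicDataPlane`, `request_offload`, `TransparentVnf` and `AwareVnf` are hypothetical names, and the "offloads" are just labels recorded per flow.

```python
from typing import Dict, List, Set


class SmartNicDataPlane:
    """Toy stand-in for a SmartNIC data plane (hypothetical, not a real API)."""

    def __init__(self) -> None:
        self._offloads: Dict[str, Set[str]] = {}

    def request_offload(self, flow_id: str, offload: str) -> None:
        # Hypothetical control call; only a VNF-aware application uses it.
        self._offloads.setdefault(flow_id, set()).add(offload)

    def deliver(self, flow_id: str, payload: bytes) -> dict:
        # Deliver the packet and report which offloads were applied on the NIC.
        applied: List[str] = sorted(self._offloads.get(flow_id, set()))
        return {"flow": flow_id, "payload": payload, "applied": applied}


class TransparentVnf:
    """Receives packets as from any standard interface; no knowledge of the NIC."""

    def __init__(self, nic: SmartNicDataPlane) -> None:
        self._nic = nic

    def receive(self, flow_id: str, payload: bytes) -> dict:
        return self._nic.deliver(flow_id, payload)


class AwareVnf(TransparentVnf):
    """Additionally uses an API to influence data plane processing."""

    def enable(self, flow_id: str, offload: str) -> None:
        self._nic.request_offload(flow_id, offload)


# Usage: the aware VNF asks for decryption on one flow; the transparent
# VNF never issues any request and simply consumes packets.
transparent = TransparentVnf(SmartNicDataPlane())
aware = AwareVnf(SmartNicDataPlane())
aware.enable("flow-1", "decrypt")
plain = transparent.receive("flow-1", b"data")
accelerated = aware.receive("flow-1", b"data")
```

The receive path is identical in both classes, which mirrors the point that transparent mode needs no application changes. The aware VNF differs only in having a control channel to the data plane.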

Demonstrations of this approach show the performance gains that can be achieved. An example is the industry’s first cloud-RAN solution that supports heterogeneous acceleration hardware and full decoupling of software and hardware. Compared to a software-only implementation, an accelerated SmartNIC-based solution achieves 10 times higher ZUC encryption throughput, three times higher PDCP system throughput and 20 times lower latency.

Conclusion

The days of fixed-function, hardened, expensive, slow-to-maneuver and costly-to-operate networking and security solutions are gone. Overcoming the challenges facing NFV deployments requires reconfigurable computing platforms based on standard servers that can offload and accelerate compute-intensive workloads, in either an inline or a look-aside model, so that work is distributed appropriately between x86 general-purpose processors and software-reconfigurable, FPGA-based SmartNICs optimized for virtualized environments.

By coupling general-purpose COTS server platforms with FPGA-based SmartNICs that are capable of supporting the most demanding requirements, network applications can operate at hundreds of gigabits of throughput with support for many millions of simultaneous flows. With this unique architecture leveraging the benefits of COTS hardware for networking applications, the vision of NFV is not over the horizon but is clearly attainable.

To live in the world of software-defined and virtualized computing without trading off performance, companies can use this reconfigurable computing platform architecture to reimagine their networks and businesses, bringing hyper-scale computing benefits to their networks and deploying new applications and services at the speed of software.