
Using FPGA or NPU Technology for NFV

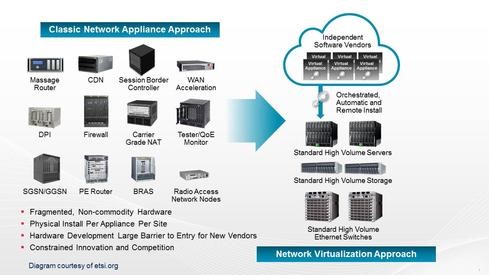

When the Network Function Virtualization (NFV) work started back in 2012, one of the fundamental motivating factors behind the technology was the strong desire to move away from dedicated, closed, proprietary solutions for each network function. The original idea was to replace those solutions with a generic open standard hardware platform and run the network functions completely in software. The general consensus was that this platform could be any Intel or ARM-based server with a standard 1/10/40G Network Interface Controller (NIC) delivering network traffic between the physical network and the virtualized network function.

Why look for alternative solutions?

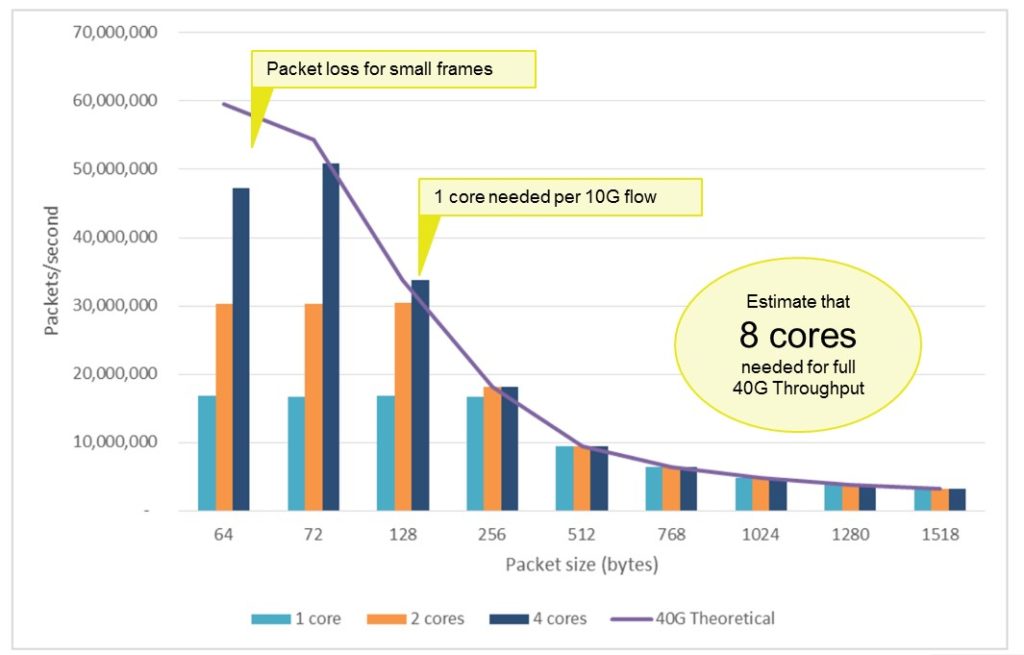

Lately we have seen people start to question whether the envisioned generic open standard hardware platform can deliver the desired outcome. Two significant challenges have been identified. Firstly, can we transfer all network traffic in and out of the virtualized network function? Secondly, how much of our CPU resources will we end up consuming just for transferring the network traffic to and from the virtualized network function? We inherit both challenges by basing our network access on a standard 1/10/40G NIC in a generic open standard hardware platform.
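To get a feel for the second challenge, here is a rough back-of-the-envelope sketch in Python. It computes the worst-case packet rate of an Ethernet link with minimum-size 64-byte frames (each frame also occupies 20 bytes of preamble and inter-frame gap on the wire) and the CPU cycle budget that leaves per packet. The 3 GHz clock speed and the single core are illustrative assumptions, not measurements from any particular server.

```python
# Per-frame overhead on the wire: preamble (7) + start-of-frame delimiter (1)
# + inter-frame gap (12) = 20 bytes.
ETHERNET_OVERHEAD_BYTES = 20


def line_rate_pps(link_gbps: float, frame_bytes: int = 64) -> float:
    """Packets per second needed to saturate the link with frames of this size."""
    bits_on_wire = (frame_bytes + ETHERNET_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_on_wire


def cycles_per_packet(link_gbps: float, frame_bytes: int = 64,
                      core_ghz: float = 3.0, cores: int = 1) -> float:
    """CPU cycles available per packet at line rate.

    core_ghz and cores are assumed values chosen for illustration only.
    """
    return cores * core_ghz * 1e9 / line_rate_pps(link_gbps, frame_bytes)


for gbps in (1, 10, 40):
    pps = line_rate_pps(gbps)
    budget = cycles_per_packet(gbps)
    print(f"{gbps:>2}G: {pps / 1e6:6.2f} Mpps, {budget:7.1f} cycles per packet on one core")
```

At 10G the budget on one such core is only about 200 cycles per packet, and that has to cover both moving the packet and whatever the network function actually does with it.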

What is the true impact of these two shortcomings in the real world? Imagine we move a critical network security function to a virtual environment and, by doing so, can no longer guarantee the inspection of all network traffic. What if a security breach occurs in traffic that the generic open standard hardware platform dropped before it ever reached the virtualized network function? And what about the CPU resources consumed in transporting network data to and from the virtualized network function? Imagine we deploy a processing-intensive network application in the NFV space. We will be spending valuable CPU resources on moving network traffic in and out of an application that is already CPU-intensive.

Source: “Intel® Open Network Platform Server Benchmark Performance Test Report”, Oct 2015

FPGA or NPU Technologies as an alternative solution

We have seen companies address these potential obstacles by replacing the standard 1/10/40G NIC with solutions based on FPGA or NPU technologies. Both technologies can easily overcome the performance limitations of the standard 1/10/40G NIC, but at a significantly higher cost.

Standard 1/10/40G NICs are built for one purpose only, and this translates to a very efficient chip design with no additional overhead for user re-programmability. At the same time, they are produced in extremely high volumes.

One thing going for FPGAs and NPUs is that they are truly multi-purpose devices: almost everything can be reprogrammed to suit specific user needs. This translates into a highly complex chip design in which a significant share of chip resources is required to provide the re-programmability. Compared to standard 1/10/40G NICs, they are also manufactured in considerably smaller volumes.

So why are we talking about chip design complexity and manufacturing volume? Because they are the primary parameters in determining the cost of a device. An efficient chip design combined with high-volume manufacturing equals lower product cost. On device cost alone, the 1/10/40G NIC will always have an advantage over solutions built on FPGA or NPU technology.

A viable business case for utilizing FPGA technologies in NFV

Now to the real question at hand. Can we build a viable business case for utilizing FPGA or NPU technology in the generic open standard hardware platform needed for NFV?

Can we justify the higher cost of FPGA or NPU devices by the performance improvements we achieve compared to a standard 1/10/40G NIC? Alternatively, should the FPGA and NPU vendors match the price of a standard 1/10/40G NIC?
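One way to frame the question is to compare platforms on cost per CPU core left over for the network function itself, rather than on NIC price alone. The Python sketch below is a simplified model under that assumption: it asks how many times more expensive an accelerated NIC may be before the platform costs more per usable core than one with a standard NIC. Every figure in the usage example (server price, NIC price, core counts) is a hypothetical placeholder, and the model ignores power, dropped traffic and development effort.

```python
from dataclasses import dataclass


@dataclass
class Platform:
    """A server plus its NIC. All values are supplied by the caller."""
    server_cost: float   # server without the NIC
    nic_cost: float      # standard NIC or FPGA/NPU-based NIC
    total_cores: int     # CPU cores in the server
    cores_for_io: int    # cores spent only on moving traffic in and out

    @property
    def app_cores(self) -> int:
        """Cores left for the virtualized network function."""
        return self.total_cores - self.cores_for_io

    @property
    def cost_per_app_core(self) -> float:
        return (self.server_cost + self.nic_cost) / self.app_cores


def break_even_nic_multiple(server_cost: float, std_nic_cost: float,
                            total_cores: int, std_io_cores: int,
                            accel_io_cores: int) -> float:
    """Price multiple (accelerated NIC / standard NIC) at which both
    platforms have the same cost per application core."""
    std = Platform(server_cost, std_nic_cost, total_cores, std_io_cores)
    accel_app_cores = total_cores - accel_io_cores
    max_accel_nic_cost = std.cost_per_app_core * accel_app_cores - server_cost
    return max_accel_nic_cost / std_nic_cost


# Hypothetical inputs, chosen only to illustrate the calculation.
multiple = break_even_nic_multiple(
    server_cost=10_000, std_nic_cost=500,
    total_cores=24, std_io_cores=6, accel_io_cores=1,
)
print(f"Break-even NIC price multiple: {multiple:.2f}x")
```

With these made-up inputs the break-even lands below 10x, so the outcome depends heavily on how many cores the accelerated NIC actually frees up and on the price of the server around it.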

I strongly believe a viable business case can be built, but it will require compromises from all the involved parties.

First, the NFV industry must realize that the generic open standard hardware platform based on an Intel or ARM processor combined with a standard NIC has severe performance limitations. In my opinion, this is happening now: the NFV industry is beginning to understand that these limitations jeopardize one of the vital ideas behind NFV, namely that a virtual network function must have the same capabilities and performance metrics as its counterpart in the physical world.

Secondly, companies offering solutions based on FPGA or NPU technologies today typically operate a low-volume, high-margin business model. They need to adapt their business model to also support lower margins and significantly higher volumes in order to serve the NFV industry. This will require close co-operation with the FPGA and NPU suppliers, as the majority of the cost of these solutions goes towards the device itself. Scaling a business is not a trivial task, but if we look back, this has been done many times and I don’t see why it can’t be done again.

Finally, the FPGA and NPU vendors must acknowledge that to become the technology supplier for the networking part of the NFV generic open standard hardware platform, they must provide more affordable and cost-efficient devices. Today, the average cost per unit of an FPGA or NPU device is almost 10 times the cost of a standard 1/10/40G NIC device. I believe that a 10x higher price will ruin the business case for using FPGA or NPU technologies in NFV, but I also believe we will see much smaller multiples once the FPGA and NPU vendors realize the potential of the opportunity in front of them.

To conclude, only time will tell whether a viable business case exists for using FPGA or NPU technology to overcome the shortcomings of the standard NICs in the generic open standard hardware platform that the NFV dream is built upon.
