
Packet Capture for Cyber Security

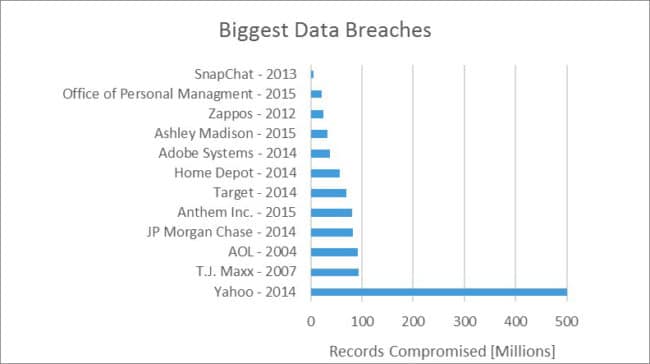

Cyber security attacks are on the rise, and we recently learned of a major new data breach at Yahoo, which apparently lost undisclosed information on 500,000,000 of its user accounts (Source: Businesswire.com). We still lack detailed information on exactly what happened at Yahoo, but we know from previous data breach incidents, such as the Target case, that the investigation will be complex and time-consuming.

For cyber security attacks there is no traditional physical crime scene from which evidence can be collected. Instead, we are facing a crime scene built from a complex structure of servers, networks and applications, scattered across many different geographical locations.

Forensic investigation of a cyber security attack is also challenged by the fact that servers, networks and applications only provide a partial and reduced set of evidence. A log file from a server, for example, can show the health of the server and the applications running at any given time, but it cannot tell exactly what information was exchanged with other servers, networks or applications. Similar arguments can be made for log files originating from networks and applications.

One way to enhance the collection of evidence for forensic analysis is to shift our focus away from servers, networks and applications and on to the actual information traversing our networks. By deploying full packet capture capabilities at strategic points across the network infrastructure, we can collect this information and reconstruct a complete picture of what occurred.

Fig 1: Biggest data breaches reported in history

Source: The Statistics Portal

UNCOMPROMISED PACKET CAPTURE

For packet capture to be reliable as forensic evidence, the first critical challenge is how to ensure that every single packet is captured. Imagine discovering during a forensic investigation that you are missing the single piece that could complete the puzzle.

To prevent this from happening, a high-speed, uncompromised packet capture solution is needed. Such a solution must be able to capture every single packet, regardless of packet size and traffic pattern, at the maximum network operating rate.

Fig 2: The missing piece of evidence

When capturing packets on a fully utilized 10 Gbps network link, up to 1.23 GByte of new packet data must be written and up to 14.88 million new records must be added to the packet capture database every second.
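The two figures can be reproduced with a short calculation. The sketch below assumes standard Ethernet framing with 20 bytes of per-frame wire overhead (preamble, start delimiter and inter-frame gap) and one database record per captured frame. Under those assumptions, the record rate peaks with the smallest frames (64 bytes) and the data rate peaks with the largest standard frames (1518 bytes), so the two numbers appear to describe two different worst cases rather than one traffic mix. The function name `line_rate_load` is illustrative only.

```python
# Per-frame overhead on the wire that is never stored:
# 7-byte preamble + 1-byte start delimiter + 12-byte inter-frame gap.
WIRE_OVERHEAD_BYTES = 20


def line_rate_load(link_bps: float, frame_bytes: int) -> tuple[float, float]:
    """Return (frames per second, captured bytes per second) on a saturated link."""
    bits_per_frame_on_wire = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    frames_per_second = link_bps / bits_per_frame_on_wire
    return frames_per_second, frames_per_second * frame_bytes


min_pps, min_bytes = line_rate_load(10e9, 64)     # smallest Ethernet frames
max_pps, max_bytes = line_rate_load(10e9, 1518)   # largest standard frames

print(f"64-byte frames:   {min_pps / 1e6:.2f} M records/s, {min_bytes / 1e9:.2f} GB/s")
print(f"1518-byte frames: {max_pps / 1e6:.2f} M records/s, {max_bytes / 1e9:.2f} GB/s")
```

With these assumptions the 64-byte case gives about 14.88 million records per second and the 1518-byte case gives about 1.23 GB per second.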

Fig 3: Needle in the haystack

THE NEEDLE IN THE HAYSTACK

The second challenge to overcome is how to find the information relevant to your forensic investigation in a packet capture database that holds several hundred TBytes or even PBytes of data and billions of individual records. This can best be described as finding the famous needle in the haystack.

Of course, going through the entire packet capture database one record at a time is not a viable solution. Instead, the packet data must be indexed as it is written to the packet capture database to enable fast searching. The most common index keys are reception time, addresses, protocol number and port numbers.

Indexing by reception time enables us to quickly find all packet data captured within a certain timeframe, and indexing by addresses, protocol number and port numbers enables us to quickly find all packet data exchanged by one user or between two users. The various types of indexes can also be combined, allowing us to quickly search for all data exchanged between two parties within a given time window.
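As a rough illustration of combining a time index with an address index, here is a minimal in-memory sketch. It is not how any particular capture product works: the `PacketRecord` and `PacketIndex` names, the `offset` field and the addresses are all hypothetical, and the sketch assumes records are added in reception order so the timestamp list stays sorted.

```python
from bisect import bisect_left, bisect_right
from collections import defaultdict
from typing import NamedTuple


class PacketRecord(NamedTuple):
    timestamp: float   # reception time in seconds
    src: str
    dst: str
    protocol: int      # IP protocol number, e.g. 6 for TCP, 17 for UDP
    src_port: int
    dst_port: int
    offset: int        # hypothetical position of the packet in the capture store


class PacketIndex:
    def __init__(self) -> None:
        self._times: list[float] = []            # sorted because packets arrive in time order
        self._records: list[PacketRecord] = []
        self._by_address: dict[str, list[int]] = defaultdict(list)

    def add(self, record: PacketRecord) -> None:
        """Index a record at write time (assumes non-decreasing timestamps)."""
        position = len(self._records)
        self._times.append(record.timestamp)
        self._records.append(record)
        self._by_address[record.src].append(position)
        self._by_address[record.dst].append(position)

    def in_window(self, start: float, end: float) -> list[PacketRecord]:
        """All records received between start and end, found by binary search."""
        lo = bisect_left(self._times, start)
        hi = bisect_right(self._times, end)
        return self._records[lo:hi]

    def between(self, a: str, b: str, start: float, end: float) -> list[PacketRecord]:
        """Records exchanged between addresses a and b inside the time window."""
        positions = set(self._by_address.get(a, [])) & set(self._by_address.get(b, []))
        return [
            self._records[p]
            for p in sorted(positions)
            if start <= self._records[p].timestamp <= end
        ]


index = PacketIndex()
index.add(PacketRecord(10.0, "10.0.0.1", "10.0.0.2", 6, 50000, 443, 0))
index.add(PacketRecord(11.0, "10.0.0.3", "10.0.0.2", 17, 50001, 53, 1500))
index.add(PacketRecord(12.0, "10.0.0.2", "10.0.0.1", 6, 443, 50000, 3000))

hits = index.between("10.0.0.1", "10.0.0.2", 9.0, 11.5)
print([record.offset for record in hits])
```

The index stores only positions, not packet contents, which is why a lookup can avoid scanning the capture store itself.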

Indexing packet data on reception time, addresses, protocol number and port numbers is an efficient way to quickly find packet data for a forensic investigation. Efficiency can be improved further by tagging every packet that originates from a given communication session with a unique session ID and indexing all packet data by that ID. This makes it possible to quickly find all data packets belonging to a given session between two entities, such as a specific YouTube video playback.
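One simple way to derive such a session ID is to hash the addresses, ports and protocol number in a direction-independent order, so that requests and replies map to the same ID. The sketch below is an assumption for illustration, not the method of any specific product: the `session_id` function is hypothetical, and it ignores the case where the same address and port combination is reused by a later session, which a real system would need to separate (for example by tracking session start and end).

```python
import hashlib
from collections import defaultdict


def session_id(src: str, src_port: int, dst: str, dst_port: int, protocol: int) -> str:
    """Return the same ID for both directions of one conversation."""
    # Sorting the two endpoints removes the direction from the key.
    first, second = sorted([(src, src_port), (dst, dst_port)])
    key = f"{protocol}|{first[0]}:{first[1]}|{second[0]}:{second[1]}"
    return hashlib.sha256(key.encode()).hexdigest()[:16]


# Session index: session ID -> hypothetical offsets of its packets in the capture store.
sessions: dict[str, list[int]] = defaultdict(list)

request = session_id("10.0.0.1", 50000, "10.0.0.2", 443, 6)
reply = session_id("10.0.0.2", 443, "10.0.0.1", 50000, 6)
other = session_id("10.0.0.1", 50001, "10.0.0.2", 443, 6)

sessions[request].append(0)
sessions[reply].append(1500)
sessions[other].append(3000)

print(sessions[request])
```

Because the request and the reply share an ID, a single lookup returns both directions of the session.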

Finding data packets of interest quickly is not the only challenge to solve. Getting the relevant packet data retrieved from the packet capture solution and into the hands of the forensic network security team for analysis as fast as possible is also important.

Why is fast retrieval important? Imagine investigating a possible security breach and quickly identifying some suspicious packet data in the packet capture database, only to then spend several hours retrieving the suspicious packet data. Firstly, this will prevent the forensic network security team from making progress until the retrieval process is complete and secondly, there is a chance that the suspicious packet data will be overwritten by newly captured packets before the retrieval process is done.

WHAT IS SUFFICIENT EVIDENCE?

By deploying packet capture capabilities, we can travel back in time. We now have a complete picture of what happened 10 minutes, 1 hour, 1 day, 1 week, 1 month or 1 year ago on the network. The big question is how far back in time must we be able to travel?

No Chief Information Officer (CIO) wants to be in a situation where a possible cyber security attack cannot be investigated due to insufficient packet capture history. In the Target case, the attackers were present in the Target IT infrastructure for more than 200 days before the data breach was discovered and a detailed investigation was initiated.

Fig 4: Sufficient evidence

To understand how much data storage capacity we need for different levels of packet capture history, we must determine how much packet data an average organization or enterprise generates. Let us assume that a small/medium enterprise generates an average network load of 750 Mbps across a 24-hour window and a large enterprise generates 5 Gbps under the same conditions. Based on these two enterprise profiles we can calculate the minimum data storage capacity required for 1 day, 1 week, 1 month and 1 year of packet capture storage.

Fig 5: Packet Capture Storage Size


The majority of packet capture solutions available today can scale to 1000 TB of data storage, which equals roughly 4 months of packet data history for a small/medium enterprise and less than a month for a large enterprise.
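The storage sizes and retention periods follow directly from the assumed average loads. The sketch below recomputes them under a few simplifying assumptions that are not stated in the article: decimal units (1 TB = 10^12 bytes), a 30-day month, a 365-day year, and no extra space for indexes or capture metadata. The function names are illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def storage_tb(average_bps: float, days: float) -> float:
    """Storage in decimal TB needed to keep `days` of traffic at `average_bps`."""
    return average_bps / 8 * SECONDS_PER_DAY * days / 1e12


def retention_days(capacity_tb: float, average_bps: float) -> float:
    """Days of packet history that fit into `capacity_tb` of storage."""
    return capacity_tb / storage_tb(average_bps, 1)


PROFILES = {"small/medium (750 Mbps)": 750e6, "large (5 Gbps)": 5e9}
PERIODS = {"1 day": 1, "1 week": 7, "1 month": 30, "1 year": 365}

for name, bps in PROFILES.items():
    sizes = ", ".join(
        f"{label}: {storage_tb(bps, days):,.0f} TB" for label, days in PERIODS.items()
    )
    print(f"{name}: {sizes}")
    print(f"  1000 TB holds about {retention_days(1000, bps):.0f} days of history")
```

Under these assumptions a small/medium enterprise produces about 8.1 TB per day and a large enterprise about 54 TB per day, which is consistent with roughly 4 months and less than a month of history in 1000 TB.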

To extend the packet data history further, the packet data can be compressed before it is written to the packet capture database. The effect of compression depends on the selected compression algorithm and on the content of the data packets, as some data is better suited to compression than other data. Standard network packet data has a typical compression ratio of 3, so compression can triple the packet capture history.
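How strongly the ratio depends on content is easy to demonstrate. The sketch below uses Python's `zlib` purely as a stand-in, since the article does not name a compression algorithm, and both sample payloads are synthetic: repeated HTTP-like text represents highly compressible traffic, while random bytes stand in for encrypted or already-compressed traffic. Real capture data will fall somewhere between these extremes.

```python
import os
import zlib


def compression_ratio(payload: bytes) -> float:
    """Original size divided by compressed size; higher means better compression."""
    return len(payload) / len(zlib.compress(payload))


# Synthetic samples, not real capture data.
text_like = b"GET /index.html HTTP/1.1\r\nHost: example.com\r\n\r\n" * 1000
random_like = os.urandom(len(text_like))   # behaves like encrypted traffic

print(f"text-like payload:   ratio {compression_ratio(text_like):.1f}")
print(f"random-like payload: ratio {compression_ratio(random_like):.2f}")
```

A ratio near 1 for the random sample shows why a link carrying mostly encrypted traffic may gain little extra history from compression.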

FINAL COMMENTS

Deploying packet capture capabilities is an extremely effective weapon in the ever-increasing battle against critical data breaches caused by cyber-attacks. But as described above, a few challenges must be addressed in order to have a successful packet capture solution.

Finally, the financial investment needed for this should also be considered. The two biggest cost drivers are: number of packet capture systems and size of packet data history. A single packet capture system can cost up to 250k USD depending on the exact system configuration and a storage solution in the PByte range could cost more than 1m USD.

Investing in packet capture capabilities is like taking out life insurance. You want to have the best achievable coverage given the amount of money you are ready to spend. You plan to never use the insurance and should the worst happen, only your relatives would know if you were covered.

I am certain that in the Target case many people, and especially the Target executive management team, would have preferred a cyber security defense solution that could have given them a quick understanding of the reported security breach.

TAGS

- 100G

- cybersecurity

- pcap

- evidence

- analysis

- data

- breach